20 Apr

AI governance: how to safely manage risks and scale innovation

Artificial intelligence is no longer a future promise but a reality in business operations. This transformation, however, brings a dilemma: how do you balance innovation with security?

Companies that implement AI without proper governance face operational, reputational and regulatory risks that can jeopardize years of brand building and trust.

AI governance should not be seen as an obstacle, but rather as the foundation for scaling innovation in a sustainable and responsible way.

Read on to understand why this process matters for your company.

What is artificial intelligence governance?

AI governance is the structured set of processes, standards and guidelines that ensure artificial intelligence systems are developed and operated in a safe, ethical and transparent manner.

In other words, it establishes oversight mechanisms that address risks such as algorithmic bias, privacy violations and misuse, while promoting innovation and building trust with stakeholders.

An effective governance structure rests on four fundamental pillars:

- Transparency: responsible disclosure about the functioning of systems, including information about databases and inference processes, allowing auditability and building public trust;

- Fairness: monitoring and mitigation of discriminatory biases to prevent social exclusion and promote algorithmic fairness in all automated decisions;

- Reliability: ensuring that systems function properly even under adverse conditions, with technical robustness and cyber protection to prevent physical, virtual or systemic damage;

- Responsibility: clear attribution of legal and ethical accountability, ensuring that AI actors are held responsible for the proper functioning of systems throughout the technology's lifecycle.
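As an illustration of what monitoring for discriminatory bias under the fairness pillar can look like, the sketch below compares approval rates across groups, a check often called the demographic parity gap. It is only one possible metric, and the function names, group labels and sample data are assumptions made for this example.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the share of positive outcomes per group.

    `decisions` is an iterable of (group, approved) pairs,
    where `approved` is True or False.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(decisions):
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: automated decisions for two groups.
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

print(approval_rates(sample))
print(demographic_parity_gap(sample))
```

A gap close to zero suggests similar treatment across groups. What counts as an acceptable gap is a policy decision that the governance structure itself would need to define.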

What are the main risks of ungoverned AI?

The absence of governance transforms AI from a strategic tool into a business risk. Three specific phenomena help illustrate this danger.

- Shadow AI: this occurs when employees use AI tools that are not authorized by the organization, creating security and compliance gaps. Research shows that up to 80% of business leaders see the explainability, ethics and bias of AI as significant obstacles to adoption, but many still underestimate the internal risks.

- Model hallucinations: when AI generates incorrect information that is presented with confidence, it can jeopardize strategic decisions and credibility with clients and partners.

- Leakage of sensitive information: exposing confidential data in public generative AI tools poses a direct risk to intellectual property and regulatory compliance.
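Two of the risks above, Shadow AI and data leakage, can be reduced with simple technical guardrails. The sketch below shows one possible approach: an allowlist of approved tools combined with redaction of sensitive patterns before a prompt leaves the company. The tool name, the patterns and the function names are hypothetical, and a real deployment would need a much broader, vetted set of rules.

```python
import re

# Hypothetical allowlist of AI tools approved by the organization.
APPROVED_TOOLS = {"internal-assistant"}

# Illustrative patterns only: e-mail addresses and formatted CPF numbers.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CPF": re.compile(r"\b\d{3}\.\d{3}\.\d{3}-\d{2}\b"),
}


def redact(text):
    """Replace every match of a sensitive pattern with a placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def prepare_prompt(tool, text):
    """Block unapproved tools and redact sensitive data for approved ones."""
    if tool not in APPROVED_TOOLS:
        raise PermissionError(f"{tool!r} is not an approved AI tool")
    return redact(text)


print(prepare_prompt("internal-assistant",
                     "Contact ana@example.com, CPF 123.456.789-09"))
```

Pattern-based redaction catches only what it is told to look for, so it complements rather than replaces employee training and usage policies.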

What are the main regulatory acts for AI governance?

The global regulatory landscape is changing rapidly. Two legal frameworks require special attention from business leaders in Brazil: the EU AI Act (Europe) and PL 2338/2023 (Brazil), which evolved into PL 6237/2025.

EU AI Act

Considered the world's first comprehensive regulatory framework for AI, it adopts a risk-based approach. High-risk systems face strict governance, risk management and transparency requirements, while uses considered to be of unacceptable risk are completely prohibited. Penalties can reach up to EUR 35 million or 7% of annual worldwide turnover.
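To make the penalty ceiling concrete, the sketch below computes the upper limit for a given turnover. It assumes the "whichever is higher" rule that the regulation applies to its top penalty tier for companies; smaller companies are treated differently, and the function name and sample figures are illustrative only.

```python
FIXED_CAP_EUR = 35_000_000  # fixed ceiling of the top penalty tier
TURNOVER_PERCENT = 7        # percent of annual worldwide turnover


def max_fine_eur(annual_worldwide_turnover_eur):
    """Upper limit of the fine, assuming the higher of the two caps applies."""
    turnover_cap = annual_worldwide_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_cap)


# A company with EUR 1 billion in turnover: 7% exceeds the fixed cap.
print(max_fine_eur(1_000_000_000))

# A company with EUR 100 million in turnover: the fixed cap is higher.
print(max_fine_eur(100_000_000))
```

For large groups the turnover-based cap dominates, which is why exposure grows with company size rather than stopping at EUR 35 million.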

PL 6237/2025

It establishes guidelines for the development and application of AI systems, with an emphasis on the protection of fundamental rights, transparency and accountability. The proposal provides for classification of systems by risk level and requires governance measures commensurate with the criticality of the applications.

Finally, companies need to understand that regulatory compliance in AI is not just a legal issue; it is also a strong competitive advantage. Organizations that establish proactive governance build trust with clients, investors and regulators.

How to overcome the challenges of implementing AI governance?

The implementation of AI governance faces two central obstacles: integration with legacy systems and building a data-driven culture.

For example, for companies operating ERPs such as Oracle JD Edwards, AI must be integrated securely and in a way that is compatible with the existing infrastructure.

Data culture is equally critical. Training technical leaders is not enough: business managers also need to be trained to understand the capabilities, limitations and ethical implications of AI.

A Gartner report indicates that by 2030, around 50% of the failures in the implementation of AI agents will be related to insufficient governance policies.

How can MPL Inova help your company with AI governance?

With over 40 years in the market, MPL has consolidated its expertise in integrating advanced technologies into complex business ecosystems. Its MPL Inova unit, recognized as the winner of the LATAM Prize for Innovation with AI, acts as a strategic advisor for companies seeking to implement artificial intelligence with governance, compliance and tangible returns.

With an approach focused on strategic AI consulting, our experts support leaders in creating a roadmap that prioritizes high-value projects, ensuring that each solution, whether it's a service agent or a complex automation, is born under a framework of compliance and security.

Likewise, in the development of AI agents, customized solutions are built to comply with the guidelines established for the protection and compliance of the data used.

Unlike purely experimental approaches, MPL Inova carries the DNA of those who understand how to work in sensitive environments, combining the agility of disruptive technologies with the robustness needed to integrate AI into business processes and ERPs, so that innovation is scalable but, above all, stable.

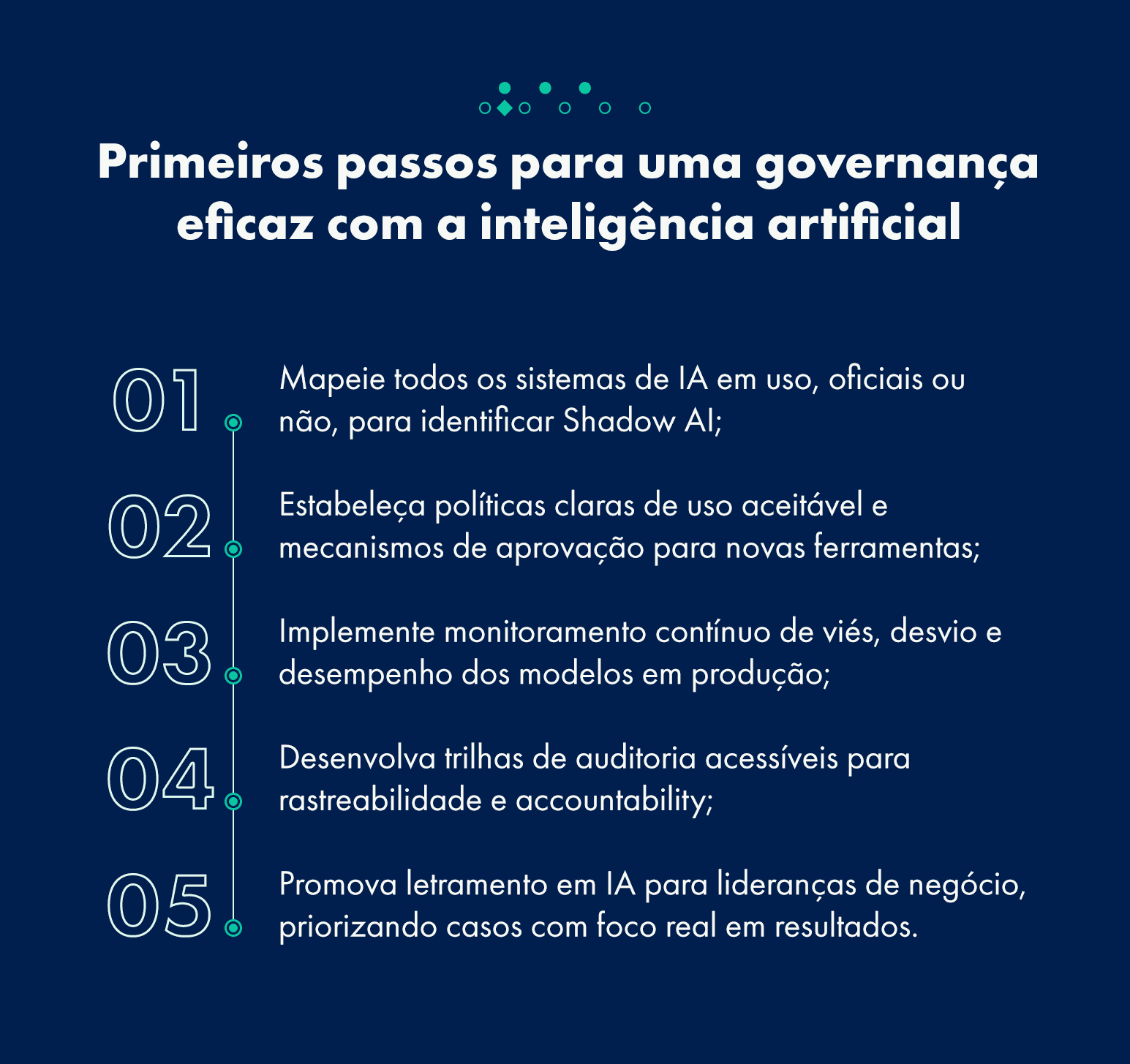

What's the next step for effective AI governance?

AI governance is a continuous process of adaptation and improvement. Companies that establish robust governance structures from the outset gain sustainable competitive advantage, as they build trust with stakeholders, reduce regulatory risks and create solid foundations for scaling innovation.

The regulatory landscape is consolidating, both globally and in Brazil. Organizations that anticipate these movements, implementing proactive rather than reactive governance, will be prepared not only to meet legal requirements, but to turn compliance into a strategic differentiator.

That's why the foundation for sustainable growth with AI lies in governance. It's not about putting the brakes on innovation, but ensuring that it is built on solid foundations of ethics, transparency and accountability.

Is your company ready for this new era of AI? Talk to the experts at MPL Inova and structure your governance now.

Frequently asked questions about AI governance

What is AI governance in practice?

It is the set of guidelines and processes that ensure artificial intelligence is used ethically, safely and transparently, aligning technological innovation with the company's objectives and standards.

What are the biggest risks of ignoring governance?

The main risks include the leakage of confidential data (Shadow AI), automatic decisions with discriminatory biases, regulatory compliance failures and irreversible damage to brand reputation.

How can artificial intelligence regulations impact my company?

The laws require companies to be transparent about their use of algorithms, to carry out impact assessments and to ensure data security, and they provide for severe sanctions for systems considered to be high risk.

Can AI governance slow down innovation?

On the contrary. Well-structured governance provides a secure path for the company to scale AI projects without being interrupted by security crises or legal issues.
